This article explains how to scrape Craigslist apartment listings using Visual Basic and the HtmlAgilityPack library. We'll go through each part of the code.

First install HtmlAgilityPack via NuGet.

Import the namespaces:

Imports System.Net

Imports HtmlAgilityPack

HtmlAgilityPack lets us parse HTML documents.

Set the URL to scrape:

Dim url As String = "https://sfbay.craigslist.org/search/apa"

Use WebClient to download the HTML:

Dim webClient As New WebClient()

Dim html As String = webClient.DownloadString(url)
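A slightly more defensive version of the download step might look like the sketch below. The helper name TryDownload and the User-Agent string are assumptions made for illustration. The sketch only shows one way to set a request header and to catch a failed request instead of letting the program crash. Note that WebClient is marked obsolete in .NET 6 and later, so newer projects may prefer HttpClient.

```vbnet
Imports System
Imports System.Net

Module DownloadSketch
    ' Hypothetical helper: returns the page HTML, or Nothing if the request fails.
    Function TryDownload(url As String) As String
        Using client As New WebClient()
            ' Assumed User-Agent value; any descriptive string can be used here.
            client.Headers.Add(HttpRequestHeader.UserAgent, "Mozilla/5.0 (compatible; ListingScraper/1.0)")
            Try
                Return client.DownloadString(url)
            Catch ex As WebException
                ' Network errors and HTTP error statuses surface as WebException.
                Console.Error.WriteLine("Download failed: " & ex.Message)
                Return Nothing
            End Try
        End Using
    End Function

    Sub Main()
        Dim html As String = TryDownload("https://sfbay.craigslist.org/search/apa")
        If html IsNot Nothing Then
            Console.WriteLine("Downloaded " & html.Length & " characters")
        End If
    End Sub
End Module
```

Returning Nothing on failure lets the caller decide whether to retry, skip, or stop.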

Load the HTML into an HtmlDocument:

Dim htmlDoc As New HtmlDocument()

htmlDoc.LoadHtml(html)

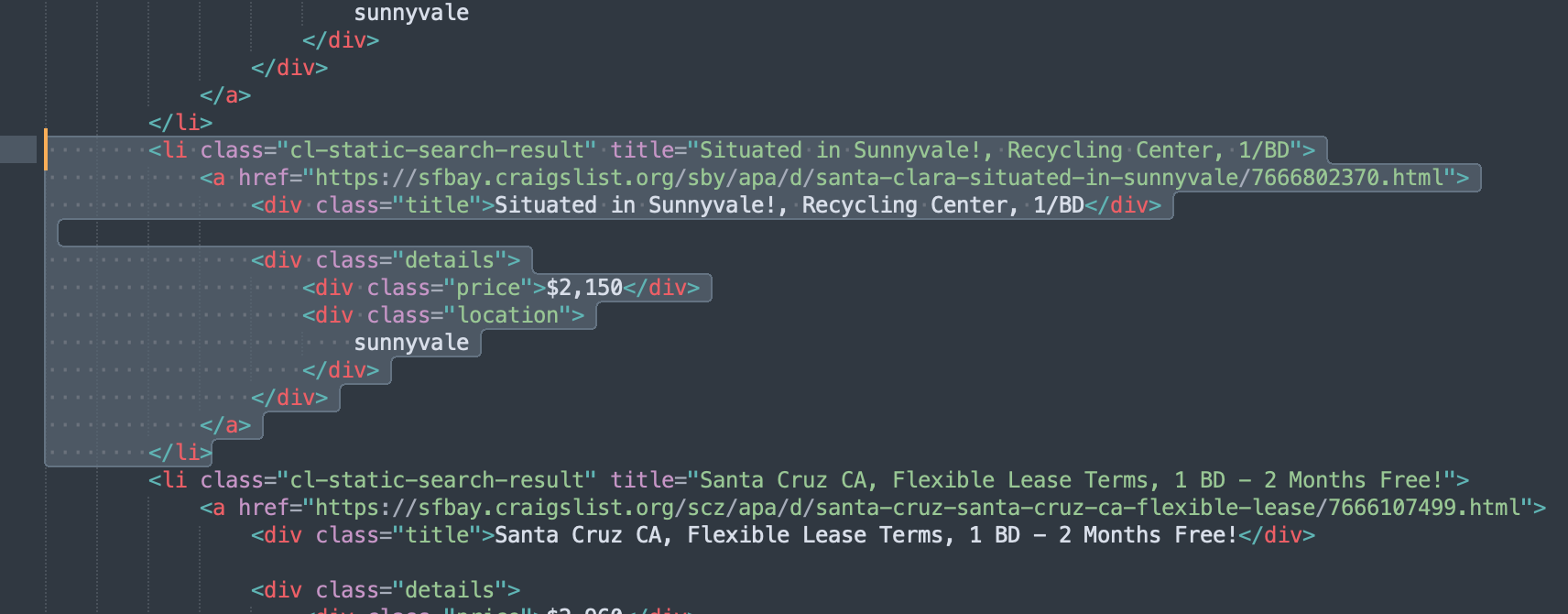

If you inspect the source of a Craigslist results page, you can see that each listing is generated by a block of HTML that looks something like this:

<li class="cl-static-search-result" title="Situated in Sunnyvale!, Recycling Center, 1/BD">

<a href="https://sfbay.craigslist.org/sby/apa/d/santa-clara-situated-in-sunnyvale/7666802370.html">

<div class="title">Situated in Sunnyvale!, Recycling Center, 1/BD</div>

<div class="details">

<div class="price">$2,150</div>

<div class="location">

sunnyvale

</div>

</div>

</a>

</li>

Each listing is encapsulated in an li element with the class cl-static-search-result. Inside it, the a element holds the link, and the div elements with the classes title, price, and location hold the rest of the data we need.
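To check this structure without hitting the live site, the sample markup can be parsed directly. The following is a minimal sketch, with the sample listing inlined as a string and a hypothetical helper named ExtractFields. It selects each div by its class, since the divs are nested inside the a element rather than sitting directly under the li.

```vbnet
Imports System
Imports HtmlAgilityPack

Module SnippetSketch
    ' Hypothetical helper: returns {title, price, location} for the first listing in the markup.
    Function ExtractFields(markup As String) As String()
        Dim doc As New HtmlDocument()
        doc.LoadHtml(markup)

        Dim item As HtmlNode = doc.DocumentNode.SelectSingleNode("//li[@class='cl-static-search-result']")

        ' ".//" searches all descendants of the li, not just its direct children.
        Return New String() {
            item.SelectSingleNode(".//div[@class='title']").InnerText.Trim(),
            item.SelectSingleNode(".//div[@class='price']").InnerText.Trim(),
            item.SelectSingleNode(".//div[@class='location']").InnerText.Trim()
        }
    End Function

    Sub Main()
        ' The sample listing from the article, inlined so the sketch runs offline.
        Dim sample As String =
            "<li class=""cl-static-search-result"" title=""Situated in Sunnyvale!, Recycling Center, 1/BD"">" &
            "<a href=""https://sfbay.craigslist.org/sby/apa/d/santa-clara-situated-in-sunnyvale/7666802370.html"">" &
            "<div class=""title"">Situated in Sunnyvale!, Recycling Center, 1/BD</div>" &
            "<div class=""details""><div class=""price"">$2,150</div>" &
            "<div class=""location""> sunnyvale </div></div></a></li>"

        For Each value As String In ExtractFields(sample)
            Console.WriteLine(value)
        Next
    End Sub
End Module
```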

Find all listing nodes with XPath:

Dim listings = htmlDoc.DocumentNode.SelectNodes("//li[@class='cl-static-search-result']")
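Two details of this query are worth guarding against. SelectNodes returns Nothing rather than an empty collection when no element matches, and an exact @class comparison stops matching if the site ever adds a second class to the li. The sketch below is one possible way to handle both; the helper name FindListings is an assumption, and the longer XPath expression is a common XPath 1.0 idiom for matching one class among several.

```vbnet
Imports System
Imports System.Collections.Generic
Imports HtmlAgilityPack

Module SelectSketch
    ' Hypothetical helper: returns the listing nodes, or an empty list when none match.
    Function FindListings(doc As HtmlDocument) As List(Of HtmlNode)
        ' Matches li elements whose class attribute contains the token, even among other classes.
        Dim xpath As String = "//li[contains(concat(' ', normalize-space(@class), ' '), ' cl-static-search-result ')]"
        Dim nodes As HtmlNodeCollection = doc.DocumentNode.SelectNodes(xpath)

        ' SelectNodes yields Nothing when there is no match, so normalize it to an empty list.
        If nodes Is Nothing Then
            Return New List(Of HtmlNode)()
        End If
        Return New List(Of HtmlNode)(nodes)
    End Function

    Sub Main()
        Dim doc As New HtmlDocument()
        doc.LoadHtml("<ul><li class=""cl-static-search-result extra"">A</li><li class=""other"">B</li></ul>")
        Console.WriteLine("Listings found: " & FindListings(doc).Count)
    End Sub
End Module
```

With an empty list as the fallback, a For Each loop over the result simply does nothing when the page has no listings.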

Loop through listings and extract info:

For Each listing As HtmlNode In listings

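' The divs are nested inside the <a> element, so match them by class among the descendants

Dim title As String = listing.SelectSingleNode(".//div[@class='title']").InnerText.Trim()

Dim price As String = listing.SelectSingleNode(".//div[@class='price']").InnerText.Trim()

Dim location As String = listing.SelectSingleNode(".//div[@class='location']").InnerText.Trim()

Dim link As String = listing.SelectSingleNode("./a").GetAttributeValue("href", "")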

Console.WriteLine(title & " " & price & " " & location & " " & link)

Next
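Real pages are not always as tidy as the sample, so a listing may lack a price or a location. The sketch below shows one way the extraction step could tolerate missing fields and collect the values in a small object instead of printing them straight away. The Listing class and the TextOrEmpty and ParseListing helpers are hypothetical names introduced for this example. HtmlEntity.DeEntitize is used to turn entities such as &amp;amp; back into plain characters, since InnerText leaves them encoded.

```vbnet
Imports System
Imports HtmlAgilityPack

Module ExtractSketch
    ' Hypothetical container for the fields of one listing.
    Public Class Listing
        Public Property Title As String
        Public Property Price As String
        Public Property Location As String
        Public Property Link As String
    End Class

    ' Returns the decoded, trimmed text of the first match, or "" when nothing matches.
    Function TextOrEmpty(parent As HtmlNode, xpath As String) As String
        Dim node As HtmlNode = parent.SelectSingleNode(xpath)
        If node Is Nothing Then Return ""
        Return HtmlEntity.DeEntitize(node.InnerText).Trim()
    End Function

    Function ParseListing(item As HtmlNode) As Listing
        Dim anchor As HtmlNode = item.SelectSingleNode(".//a")
        Return New Listing With {
            .Title = TextOrEmpty(item, ".//div[@class='title']"),
            .Price = TextOrEmpty(item, ".//div[@class='price']"),
            .Location = TextOrEmpty(item, ".//div[@class='location']"),
            .Link = If(anchor Is Nothing, "", anchor.GetAttributeValue("href", ""))
        }
    End Function

    Sub Main()
        ' A made-up listing with no price or location, to exercise the fallbacks.
        Dim doc As New HtmlDocument()
        doc.LoadHtml("<ul><li class=""cl-static-search-result""><a href=""https://example.com/1""><div class=""title"">Tom &amp; Co flat</div></a></li></ul>")
        Dim parsed As Listing = ParseListing(doc.DocumentNode.SelectSingleNode("//li"))
        Console.WriteLine(parsed.Title & " | " & parsed.Price & " | " & parsed.Link)
    End Sub
End Module
```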

The full VB.NET code:

Imports System

Imports System.Net

Imports HtmlAgilityPack

Module Program

Sub Main()

' Download the search results page

Dim url As String = "https://sfbay.craigslist.org/search/apa"

Dim webClient As New WebClient()

Dim html As String = webClient.DownloadString(url)

' Parse the HTML

Dim htmlDoc As New HtmlDocument()

htmlDoc.LoadHtml(html)

' SelectNodes returns Nothing when no element matches

Dim listings = htmlDoc.DocumentNode.SelectNodes("//li[@class='cl-static-search-result']")

If listings Is Nothing Then Return

For Each listing As HtmlNode In listings

' The divs are nested inside the <a> element, so match them by class among the descendants

Dim title As String = listing.SelectSingleNode(".//div[@class='title']").InnerText.Trim()

Dim price As String = listing.SelectSingleNode(".//div[@class='price']").InnerText.Trim()

Dim location As String = listing.SelectSingleNode(".//div[@class='location']").InnerText.Trim()

Dim link As String = listing.SelectSingleNode("./a").GetAttributeValue("href", "")

Console.WriteLine(title & " " & price & " " & location & " " & link)

Next

End Sub

End Module

This walks through scraping Craigslist listings in VB.NET.

This is great as a learning exercise, but a scraper like this sends every request from a single IP address, so it is prone to getting blocked. If you need to handle thousands of fetches every day, using a professional rotating proxy service to rotate IPs is almost a must.

Otherwise, automatic location, usage, and bot detection algorithms tend to block your IP frequently.

Our rotating proxy server Proxies API provides a simple API that can solve all IP Blocking problems instantly.

Hundreds of our customers have successfully solved the headache of IP blocks with a simple API.

The whole thing can be accessed by a simple API like below in any programming language.

In fact, you don't even have to take the pain of loading Puppeteer, as we render JavaScript behind the scenes. You can just get the data and parse it in any language like Node or PHP, or with any framework like Scrapy or Nutch. In all these cases you can just call the URL with render support like so:

curl "http://api.proxiesapi.com/?key=API_KEY&render=true&url=https://example.com"
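The same call could be made from VB.NET along the lines of the sketch below. The key, render, and url parameters come from the curl example above; the helper name BuildProxyUrl is hypothetical, and URL-encoding the target address is an assumption on my part, made so that any & or ? inside the target is not read as a parameter of the API call itself. API_KEY is a placeholder for your own key.

```vbnet
Imports System
Imports System.Net

Module ProxySketch
    ' Hypothetical helper: builds the request URL from the parameters shown in the curl example.
    Function BuildProxyUrl(apiKey As String, targetUrl As String) As String
        ' Assumption: the target URL is percent-encoded before being passed along.
        Return "http://api.proxiesapi.com/?key=" & Uri.EscapeDataString(apiKey) &
               "&render=true&url=" & Uri.EscapeDataString(targetUrl)
    End Function

    Sub Main()
        Dim requestUrl As String = BuildProxyUrl("API_KEY", "https://sfbay.craigslist.org/search/apa")
        Using client As New WebClient()
            ' The returned HTML can be loaded into an HtmlDocument exactly as before.
            Dim html As String = client.DownloadString(requestUrl)
            Console.WriteLine("Fetched " & html.Length & " characters")
        End Using
    End Sub
End Module
```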

We have a running offer of 1000 API calls completely free. Register and get your free API Key.